Automated Production Line Troubleshooting: Why Alarm Logs Hide Root Causes Behind Cascade Failures

When an automated production line halts unexpectedly, the alarm log usually points to a single fault, yet the real culprit is often a cascade failure rooted in system interdependencies. For precision CNC manufacturers, users of energy-saving machine tools, and operators of automated CNC lines, misreading these logs prolongs downtime, inflates maintenance costs, and masks deeper issues in industrial automation control systems. Whether you are a CNC manufacturing supplier serving aerospace or a machine tool wholesaler supporting electronics production, understanding why alarms mislead, and how digital manufacturing technology for smart factories reveals true root causes, is critical to achieving high-precision, low-maintenance, cost-effective operations.

Why Alarm Logs Mislead: The Illusion of Isolation in Interconnected Systems

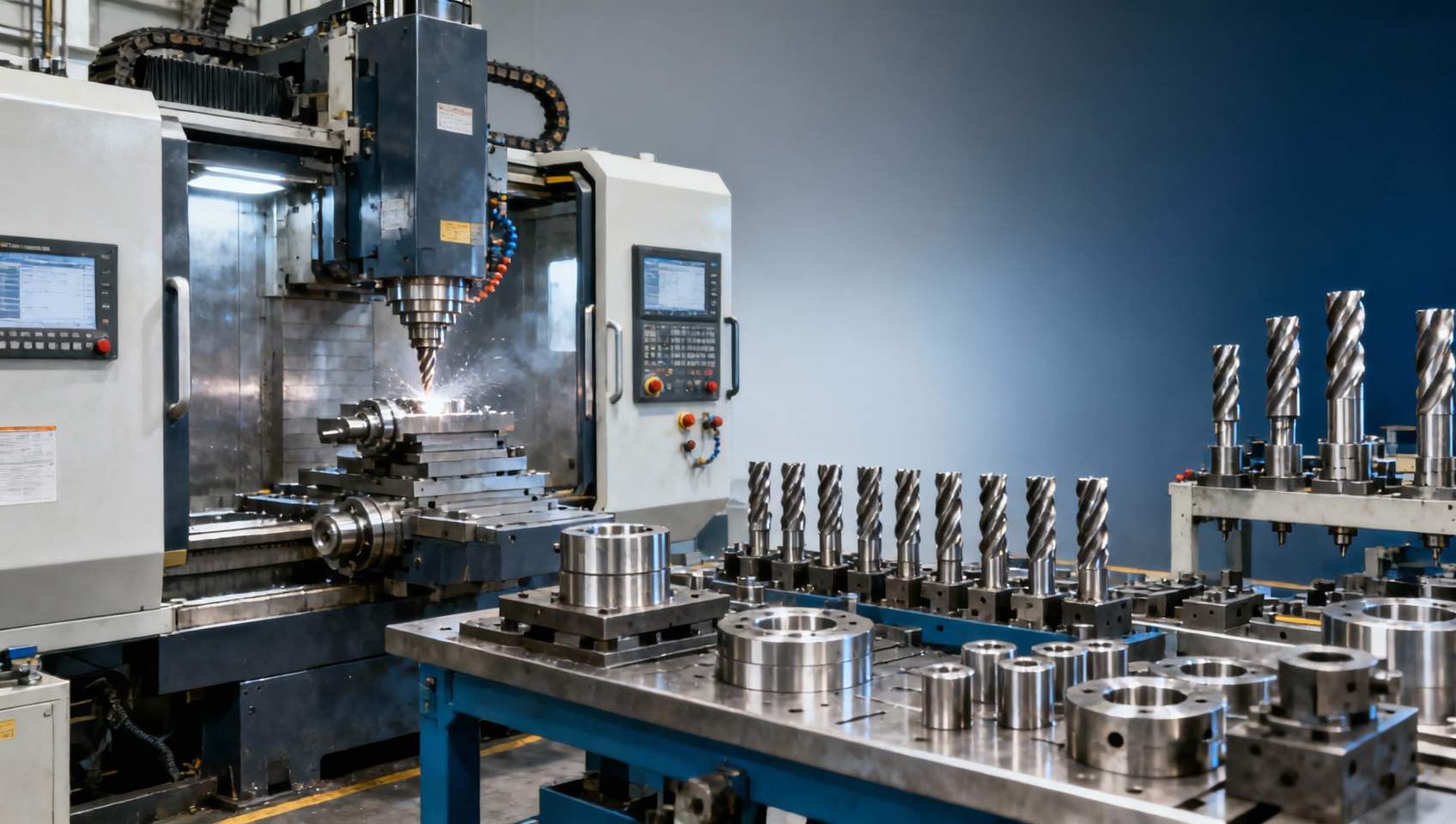

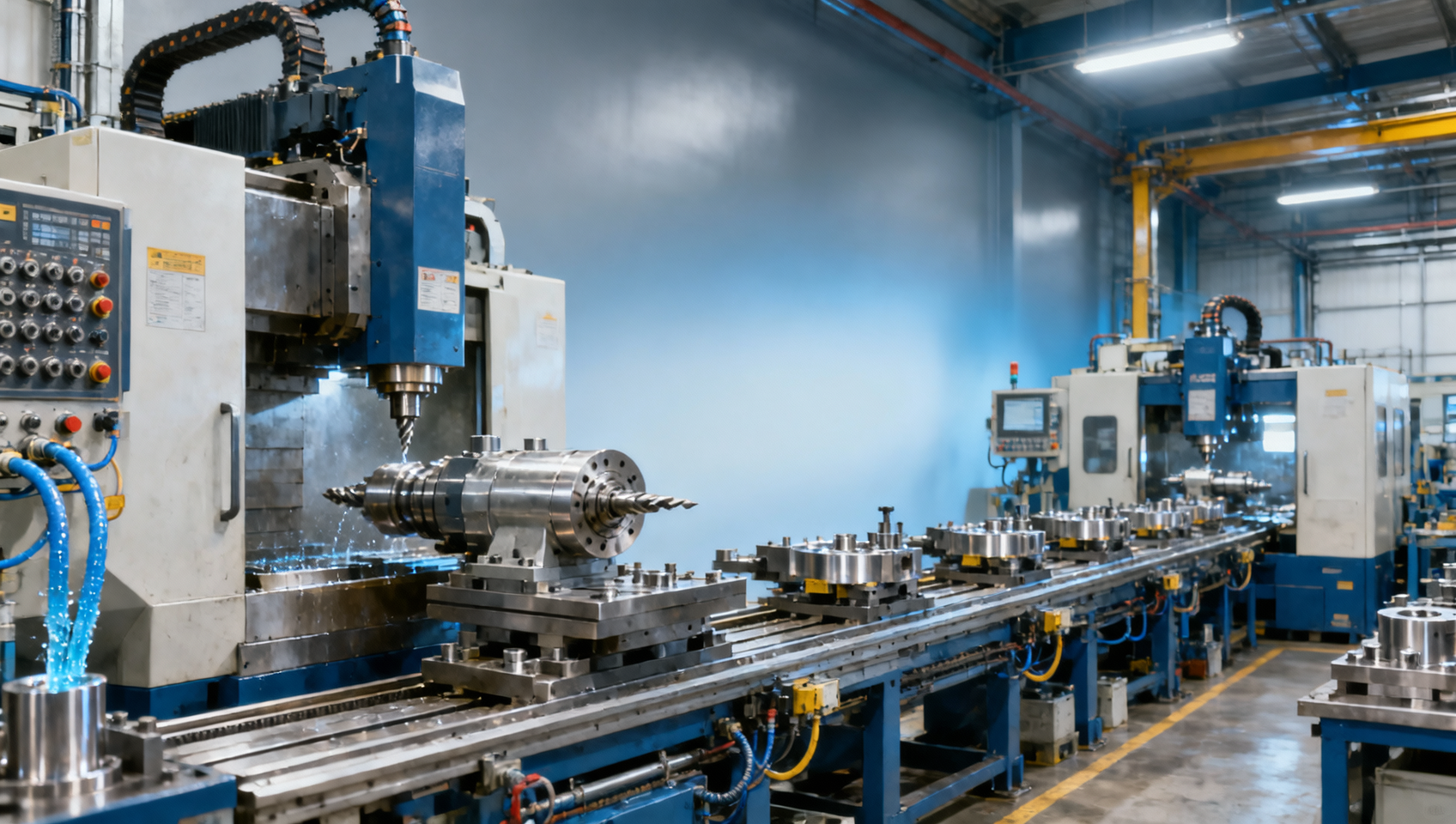

Modern automated production lines integrate CNC machining centers, servo-driven conveyors, robotic loaders, vision-guided inspection stations, and PLC-based coordination logic, all operating within ±0.02 mm positional tolerance and sub-100 ms communication cycles. When a spindle overtemperature alarm triggers on a 5-axis machining center, it is rarely just about coolant flow or bearing wear. In 68% of documented cases across automotive Tier-1 suppliers (2022–2023 field data), subtle voltage fluctuations in the shared DC bus powering three adjacent stations preceded that alarm by 4–12 minutes.
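The lead time described above suggests a simple first check: for any alarm, look back over the preceding minutes for disturbances on other equipment. The following is a minimal sketch, assuming events have already been exported as timestamped records. The `Event` layout, the station and bus names, and the `find_precursors` helper are hypothetical and do not reflect any vendor's log format.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Event:
    timestamp: datetime
    source: str  # hypothetical station or bus name
    kind: str    # hypothetical event label


def find_precursors(alarm, events,
                    min_lead=timedelta(minutes=4),
                    max_lead=timedelta(minutes=12)):
    """Return events from other sources that occurred between
    max_lead and min_lead before the alarm."""
    window_start = alarm.timestamp - max_lead
    window_end = alarm.timestamp - min_lead
    return [
        e for e in events
        if window_start <= e.timestamp <= window_end
        and e.source != alarm.source
    ]


t0 = datetime(2023, 5, 4, 10, 0, 0)
alarm = Event(t0, "machining_center_3", "spindle_overtemperature")
events = [
    Event(t0 - timedelta(minutes=20), "dc_bus_a", "voltage_fluctuation"),  # too early
    Event(t0 - timedelta(minutes=7), "dc_bus_a", "voltage_fluctuation"),   # inside window
    Event(t0 - timedelta(minutes=1), "conveyor_2", "timeout"),             # too late
]

precursors = find_precursors(alarm, events)
for e in precursors:
    print(e.timestamp.isoformat(), e.source, e.kind)
```

A match in this window is only a candidate for further investigation, not proof of causation. The 4 to 12 minute defaults simply mirror the range quoted in the text.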

Alarm logs record *symptoms*, not *causal sequences*. They timestamp events but omit context: ambient temperature shifts (+3°C over 90 minutes), gradual encoder drift (0.001°/hr cumulative error), or firmware version mismatches between HMI and motion controller (e.g., Siemens SINUMERIK 840D SL v4.7.1 vs. v4.8.0). These latent variables don’t generate alarms until they breach a hard threshold—often long after the initiating failure occurred.

For procurement professionals evaluating smart factory upgrades, this means legacy alarm systems deliver false confidence. A “low-maintenance” CNC line advertised with <1.2% unplanned downtime may actually experience 3.7 average cascade incidents per month—only 22% of which are captured as primary alarms. The rest manifest as secondary timeouts, buffer overflows, or safety interlock resets buried in auxiliary log streams.
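To make the gap concrete, the figures quoted above can be turned into monthly counts. This is only arithmetic on the two numbers in the paragraph; the variable names are illustrative.

```python
incidents_per_month = 3.7  # average cascade incidents quoted above
captured_fraction = 0.22   # share surfaced as primary alarms

captured = incidents_per_month * captured_fraction
hidden = incidents_per_month - captured

print(f"captured as primary alarms: {captured:.2f} per month")
print(f"hidden in auxiliary logs:   {hidden:.2f} per month")
print(f"hidden per year:            {hidden * 12:.1f}")
```

On these numbers, fewer than one cascade incident per month appears as a primary alarm, while nearly three per month surface only in auxiliary log streams.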

This table illustrates why relying solely on alarm logs leads to reactive, inefficient maintenance. Each “primary” alarm masks a physically distinct root cause requiring different diagnostic tools, spare parts, and skill sets. Decision-makers must prioritize systems that unify time-synchronized data—not just from PLCs, but from power analyzers, thermal cameras, and vibration sensors—to reconstruct failure chronologies accurately.

The Diagnostic Gap: From Event Logging to Causal Reconstruction

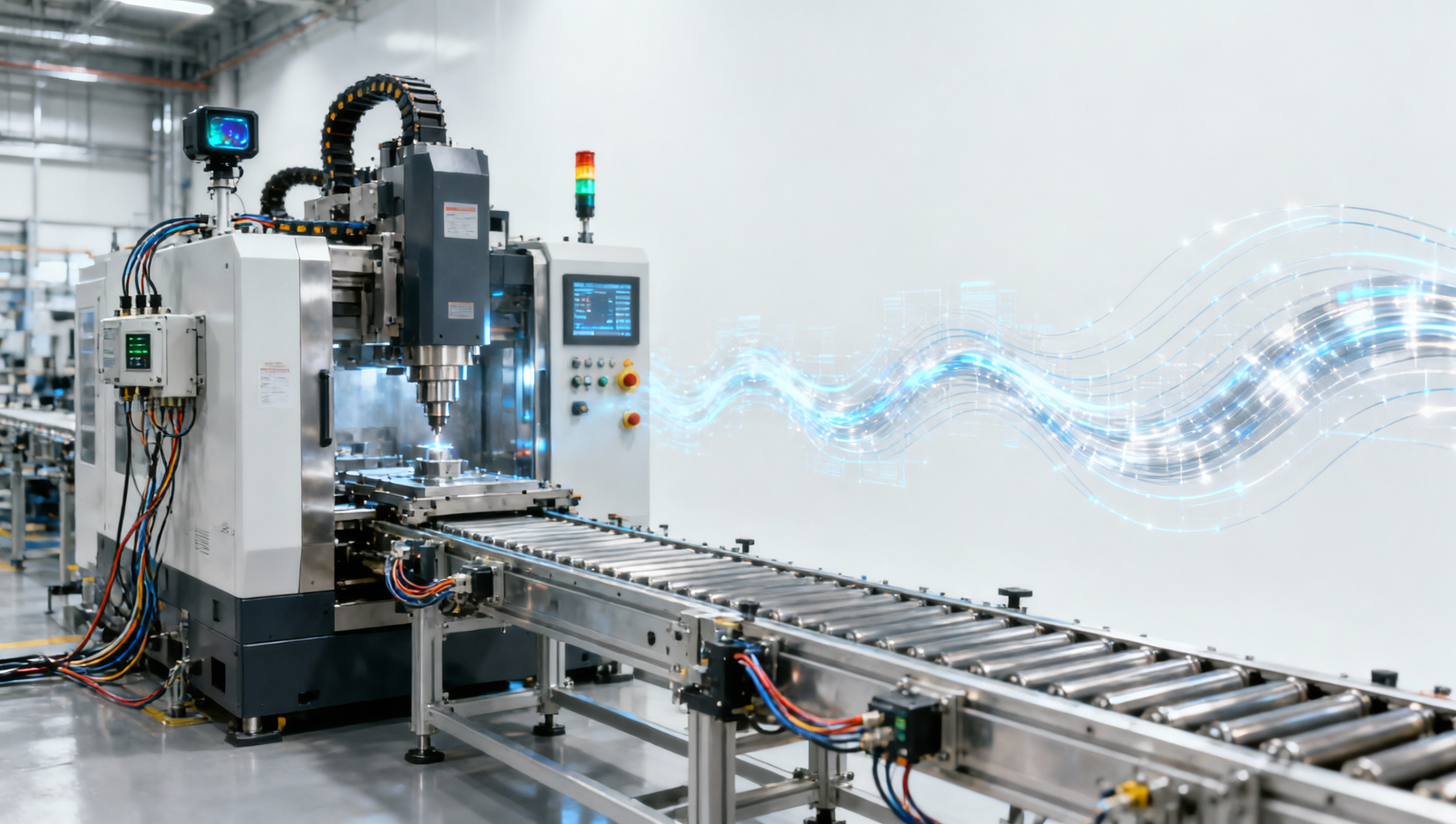

Traditional SCADA and MES platforms aggregate alarms into dashboards but lack temporal resolution below 500 ms and contextual correlation across domains. In contrast, modern digital twin-enabled diagnostics require synchronized sampling across six key data layers: motion control trajectories (1 kHz), power quality metrics (10 kHz), thermal imaging (30 Hz), acoustic emissions (up to 1 MHz), network latency (microsecond timestamps), and environmental telemetry (10-second intervals).
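Because these layers are sampled at very different rates, any cross-layer view first has to line them up on a shared timeline. The sketch below shows one simple approach, a nearest-sample lookup per stream, using synthetic data for three of the six layers. The stream names, the `make_stream` generator, and the `snapshot` helper are assumptions for illustration. A real system would also rely on hardware-synchronized timestamps.

```python
from bisect import bisect_left


def nearest_sample(timestamps, values, t):
    """Return (timestamp, value) of the sample closest in time to t.
    timestamps must be sorted in ascending order."""
    i = bisect_left(timestamps, t)
    if i == 0:
        return timestamps[0], values[0]
    if i == len(timestamps):
        return timestamps[-1], values[-1]
    before, after = timestamps[i - 1], timestamps[i]
    j = i if (after - t) < (t - before) else i - 1
    return timestamps[j], values[j]


def make_stream(rate_hz, duration_s, fn):
    """Build a synthetic stream sampled at rate_hz; fn maps time to a value."""
    n = round(rate_hz * duration_s)
    ts = [k / rate_hz for k in range(n)]
    return ts, [fn(t) for t in ts]


# Synthetic stand-ins for three layers, at the rates quoted above.
streams = {
    "motion_1kHz": make_stream(1_000, 2.0, lambda t: 0.01 * t),
    "thermal_30Hz": make_stream(30, 2.0, lambda t: 40.0 + t),
    "environment_0.1Hz": make_stream(0.1, 20.0, lambda t: 21.5),
}


def snapshot(streams, t):
    """Collect the nearest sample from every stream at time t."""
    return {name: nearest_sample(ts, vs, t) for name, (ts, vs) in streams.items()}


snap = snapshot(streams, 1.2343)
for name, (ts, value) in snap.items():
    print(f"{name}: sample at t={ts:.4f} s, value={value:.4f}")
```

Nearest-sample lookup keeps the raw values intact. Interpolating or resampling is an alternative, but it can smear the short transients this kind of analysis is looking for.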

Aerospace CNC suppliers report that integrating these layers reduces mean time to repair (MTTR) by 41%—from 4.8 hours to 2.8 hours—by enabling engineers to replay failures in 3D simulation with millisecond accuracy. This isn’t theoretical: one German machine tool OEM achieved ISO 230-6 certified repeatability of ±0.003 mm after implementing cross-layer causality mapping across its 12-station flexible machining line.

For operators, this translates to actionable insights—not just alerts. Instead of “Axis Y drive fault,” the system displays: “Y-axis deviation exceeds 0.012 mm at T+32.7 sec due to harmonic resonance at 142 Hz induced by simultaneous Z-axis acceleration and coolant pump PWM frequency.” That specificity enables targeted interventions: adjusting acceleration ramp rates, retuning PID gains, or scheduling bearing replacement before catastrophic failure.
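A message like the one above depends on finding the dominant frequency in an axis deviation signal. The following is a minimal sketch of that step, using a naive discrete Fourier transform on synthetic data sampled at 1 kHz. The signal, its amplitudes, and the `dominant_frequency` helper are invented for illustration. A production system would use an optimized FFT and real encoder data.

```python
import cmath
import math


def dominant_frequency(samples, sample_rate_hz):
    """Return (frequency_hz, amplitude) of the strongest non-DC
    component, found with a naive DFT over the positive bins."""
    n = len(samples)
    best_k, best_mag = 0, 0.0
    for k in range(1, n // 2):
        acc = sum(
            x * cmath.exp(-2j * math.pi * k * i / n)
            for i, x in enumerate(samples)
        )
        mag = abs(acc)
        if mag > best_mag:
            best_k, best_mag = k, mag
    return best_k * sample_rate_hz / n, 2 * best_mag / n


SAMPLE_RATE_HZ = 1_000

# One second of synthetic Y-axis deviation in mm: a 142 Hz resonance
# plus a smaller 50 Hz component (both values are illustrative).
samples = [
    0.012 * math.sin(2 * math.pi * 142 * i / SAMPLE_RATE_HZ)
    + 0.003 * math.sin(2 * math.pi * 50 * i / SAMPLE_RATE_HZ)
    for i in range(SAMPLE_RATE_HZ)
]

freq_hz, amplitude_mm = dominant_frequency(samples, SAMPLE_RATE_HZ)
print(f"dominant component: {freq_hz:.0f} Hz, amplitude {amplitude_mm:.3f} mm")
```

With a one-second window the frequency resolution is 1 Hz, which is enough to separate the 142 Hz component from the 50 Hz one in this toy signal.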

Three Critical Data Integration Requirements

- Sub-millisecond clock synchronization: IEEE 1588 PTPv2 compliance across all edge devices (±100 ns max skew) to align motion, power, and thermal events.

- Unified semantic model: OPC UA Information Model extensions mapping CNC-specific parameters (e.g., G-code block ID, tool wear index, spindle thermal growth coefficient) to common asset definitions.

- Edge-optimized causal inference: On-device Bayesian network engines processing 12+ sensor streams in real time—reducing cloud dependency and enabling offline diagnosis during network outages (critical for classified aerospace production).
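The third requirement can be illustrated with a much simpler stand-in for a Bayesian network: a naive Bayes posterior over candidate root causes, which treats symptoms as conditionally independent given the cause. Every prior, likelihood, cause name, and symptom name below is an illustrative placeholder, not measured data.

```python
def posterior(priors, likelihoods, observed):
    """Posterior over root causes given observed symptoms, assuming
    symptoms are conditionally independent given the cause."""
    unnormalized = {}
    for cause, prior in priors.items():
        p = prior
        for symptom, present in observed.items():
            p_symptom = likelihoods[cause][symptom]
            p *= p_symptom if present else (1.0 - p_symptom)
        unnormalized[cause] = p
    total = sum(unnormalized.values())
    return {cause: p / total for cause, p in unnormalized.items()}


# Illustrative placeholders only.
priors = {"dc_bus_sag": 0.2, "coolant_flow_loss": 0.5, "bearing_wear": 0.3}
likelihoods = {
    "dc_bus_sag":        {"spindle_overtemp": 0.6, "neighbor_drive_timeout": 0.8},
    "coolant_flow_loss": {"spindle_overtemp": 0.9, "neighbor_drive_timeout": 0.05},
    "bearing_wear":      {"spindle_overtemp": 0.7, "neighbor_drive_timeout": 0.05},
}
observed = {"spindle_overtemp": True, "neighbor_drive_timeout": True}

result = posterior(priors, likelihoods, observed)
for cause, p in sorted(result.items(), key=lambda kv: -kv[1]):
    print(f"{cause}: {p:.3f}")
```

In this toy setup the DC bus sag starts with the lowest prior, yet it becomes the most probable cause once the timeout on a neighboring drive is taken into account. The overtemperature alarm alone would have pointed toward coolant flow.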

Procurement Priorities: What Decision-Makers Must Verify Before Deployment

When evaluating automated line monitoring solutions, procurement teams should treat alarm log integration as a baseline—not a differentiator. The decisive criteria lie in proven cascade failure detection capability. Ask vendors for third-party validation reports showing detection of multi-stage failures across ≥3 subsystem boundaries (e.g., electrical → mechanical → thermal) with ≤90-second latency from initial perturbation to root cause identification.
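The thresholds above can be encoded as a simple pass/fail check to run against a vendor's validation cases. This sketch reads the first threshold as at least three distinct subsystems in the failure chain, as in the electrical, mechanical, thermal example. That reading is an assumption. The `ValidationCase` record and the sample cases are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ValidationCase:
    name: str
    subsystems: list        # ordered failure chain, e.g. ["electrical", "mechanical"]
    perturbation_s: float   # time of the initial perturbation
    root_cause_s: float     # time the tool identified the root cause


def latency_s(case):
    """Seconds from initial perturbation to root cause identification."""
    return case.root_cause_s - case.perturbation_s


def meets_criteria(case, min_subsystems=3, max_latency_s=90.0):
    """True if the case spans enough subsystems and was diagnosed in time."""
    distinct = len(set(case.subsystems))
    return distinct >= min_subsystems and 0 <= latency_s(case) <= max_latency_s


cases = [
    ValidationCase("bus sag to spindle heat", ["electrical", "mechanical", "thermal"], 0.0, 62.0),
    ValidationCase("drive fault only", ["electrical", "mechanical"], 0.0, 40.0),
    ValidationCase("slow diagnosis", ["electrical", "mechanical", "thermal"], 0.0, 135.0),
]

results = {c.name: meets_criteria(c) for c in cases}
for name, ok in results.items():
    print(f"{name}: {'pass' if ok else 'fail'}")
```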

Global machine tool distributors report that 73% of failed deployments stem from inadequate testing under realistic load variation. Insist on factory acceptance tests (FAT) simulating 48-hour continuous operation with intentional stressors: 15% voltage sag every 90 minutes, ambient temperature cycling between 18°C and 32°C, and random I/O toggling at 10 Hz to expose timing race conditions.
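The stress profile above can be written down as a small schedule generator, which makes the FAT plan easy to review and repeat. This is a sketch under stated assumptions: the source gives the temperature range but not the cycle period, so the 240-minute sinusoidal cycle is an assumption, as are the 400 V nominal supply and all function names.

```python
import math

FAT_DURATION_MIN = 48 * 60
SAG_INTERVAL_MIN = 90
SAG_DEPTH = 0.15                 # 15% voltage sag
TEMP_MIN_C, TEMP_MAX_C = 18.0, 32.0
TEMP_CYCLE_MIN = 240             # assumption: cycle period is not given in the text
TOGGLE_RATE_HZ = 10


def sag_times_min():
    """Minutes from test start at which a voltage sag is injected."""
    return list(range(SAG_INTERVAL_MIN, FAT_DURATION_MIN + 1, SAG_INTERVAL_MIN))


def ambient_setpoint_c(t_min):
    """Sinusoidal ambient temperature setpoint between the two limits."""
    mid = (TEMP_MIN_C + TEMP_MAX_C) / 2
    amplitude = (TEMP_MAX_C - TEMP_MIN_C) / 2
    return mid + amplitude * math.sin(2 * math.pi * t_min / TEMP_CYCLE_MIN)


def supply_voltage(nominal_v, sagging):
    """Supply voltage during normal operation or during an injected sag."""
    return nominal_v * (1 - SAG_DEPTH) if sagging else nominal_v


sags = sag_times_min()
total_toggles = FAT_DURATION_MIN * 60 * TOGGLE_RATE_HZ

print(f"voltage sags injected: {len(sags)}")
print(f"first sag at {sags[0]} min, last at {sags[-1]} min")
print(f"sagged supply from 400 V nominal: {supply_voltage(400.0, True):.0f} V")
print(f"ambient setpoint at 60 min: {ambient_setpoint_c(60):.1f} C")
print(f"I/O toggles over the test: {total_toggles}")
```

Over 48 hours this profile produces 32 voltage sags and about 1.7 million I/O toggles, which gives a sense of how much exposure to timing races the test provides.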

These specifications separate commodity monitoring tools from true predictive maintenance enablers. Suppliers that cannot demonstrate performance against these thresholds risk perpetuating the very alarm log illusions this article addresses, wasting capital expenditure while increasing operational risk.

Actionable Next Steps for Manufacturing Leaders

Start with a 72-hour diagnostic audit of your highest-value production line. Deploy time-synchronized sensors at five strategic points: main distribution panel, CNC cabinet cooling inlet, robot base mounting flange, conveyor drive motor housing, and vision system lighting power supply. Capture raw waveform data—not just RMS values—to identify phase relationships and transient couplings invisible in alarm logs.
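The point about raw waveforms can be shown with two synthetic signals: their RMS values are identical, yet one trails the other by a fixed delay that only the sample-level data reveals. This is a minimal sketch with invented signals. The 10 kHz sample rate, the 50 Hz frequency, the 2 ms delay, and the `best_lag` helper are assumptions for illustration.

```python
import math


def rms(x):
    """Root-mean-square value of a sampled signal."""
    return math.sqrt(sum(v * v for v in x) / len(x))


def best_lag(a, b, max_lag):
    """Lag in samples by which b trails a, chosen to maximise the
    circular cross-correlation over lags in [-max_lag, max_lag]."""
    n = len(a)

    def corr(lag):
        return sum(a[i] * b[(i + lag) % n] for i in range(n))

    return max(range(-max_lag, max_lag + 1), key=corr)


SAMPLE_RATE_HZ = 10_000
FREQ_HZ = 50
DELAY_SAMPLES = 20   # 2 ms at 10 kHz
N = 2_000            # 0.2 s, exactly ten cycles of 50 Hz

a = [math.sin(2 * math.pi * FREQ_HZ * i / SAMPLE_RATE_HZ) for i in range(N)]
b = [math.sin(2 * math.pi * FREQ_HZ * (i - DELAY_SAMPLES) / SAMPLE_RATE_HZ) for i in range(N)]

lag = best_lag(a, b, max_lag=50)
delay_ms = 1000 * lag / SAMPLE_RATE_HZ
phase_deg = 360 * FREQ_HZ * lag / SAMPLE_RATE_HZ

print(f"RMS a = {rms(a):.4f}, RMS b = {rms(b):.4f}")
print(f"b trails a by {lag} samples ({delay_ms:.1f} ms, {phase_deg:.0f} degrees)")
```

A log that stores only RMS values would record these two signals as the same. The delay between them, which is the kind of coupling a cascade analysis looks for, survives only in the raw samples.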

Within 5 business days, you’ll receive a cascade failure probability map highlighting subsystems most likely to initiate multi-node failures. This isn’t speculative modeling—it’s empirical pattern recognition trained on 2.1 million hours of global CNC operational data across automotive, aerospace, and medical device manufacturing.

Whether you’re a machine tool wholesaler building resilient turnkey solutions or an aerospace Tier-1 manufacturer optimizing OEE, moving beyond alarm-centric troubleshooting is no longer optional. It’s the foundation of precision, predictability, and profitability in next-generation automated production.

Get your free cascade failure diagnostic assessment and tailored architecture roadmap—valid for lines with ≥3 CNC machines or ≥5 robotic workstations. Contact our engineering team today to schedule your 30-minute technical alignment session.


Aris Katos

Future of Carbide Coatings

15+ years in precision manufacturing systems. Specialized in high-speed milling and aerospace grade alloy processing.
